🤖 Micrograd Cpp

A tiny scalar-valued autograd engine and neural network stack in C++, built to understand automatic differentiation and low-level deep learning mechanics.

Rebuild a minimal autograd and neural network system from first principles in C++ to better understand how modern deep learning frameworks work under the hood.

Implement the computational graph, scalar value operations, and reverse-mode autodiff manually, then validate the core engine with a simple neural network stack.

Turned a foundational ML learning exercise into a reusable, systems-oriented project that demonstrates a strong grasp of autodiff, backpropagation, and clean C++ implementation.

Solo builder

- AI

- ML

- Machine Learning

- C++

What this project is

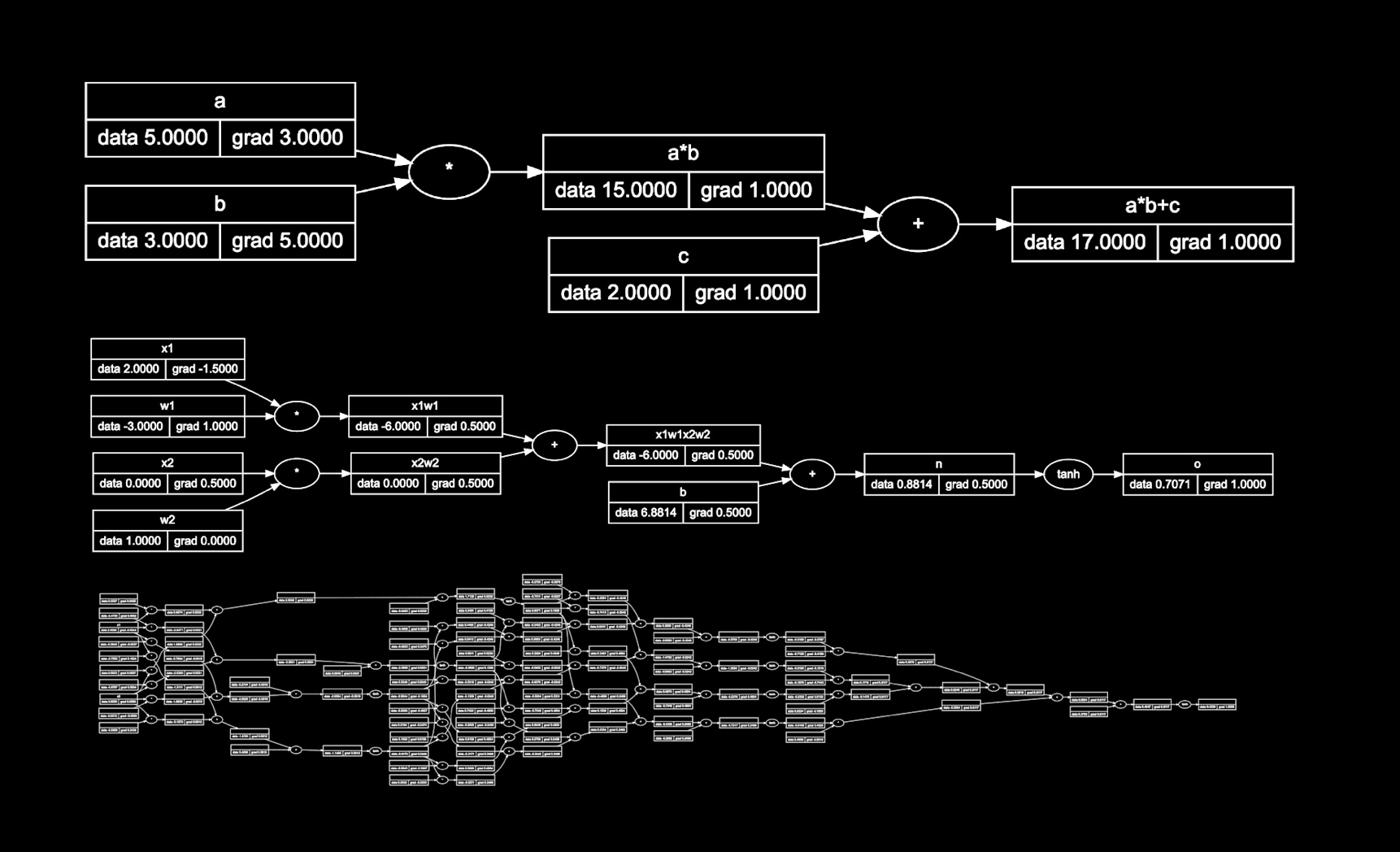

micrograd-cpp is my C++ reimplementation of the ideas behind Andrej Karpathy's micrograd: a compact scalar-valued autograd engine with a neural network stack built on top of it. The goal was not just to reproduce its behavior, but to use the project as a systems-level exercise in how automatic differentiation actually works.

Problem I wanted to solve

Modern deep learning frameworks make gradient computation feel effortless, but that abstraction can hide the mechanics that matter when reasoning about model behavior, performance, or debugging. I wanted a small codebase where every value, graph edge, and backward pass step is understandable.

What I built

- A scalar-based value object that tracks data, gradients, and graph dependencies.

- Forward graph construction that captures the operations needed for backpropagation.

- Reverse-mode autodiff for computing gradients across composed expressions.

- A small neural network layer on top to validate the core engine end-to-end.
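To make the pieces listed above concrete, here is a minimal sketch of a scalar value node, forward graph construction for two operations, and a reverse-mode backward pass. It is an illustration under my own assumptions, not the project's actual code: the names `Node`, `Value`, `make_value`, `add`, `mul`, and `backward` are hypothetical, and using `std::shared_ptr` for graph ownership is just one possible design.

```cpp
#include <cassert>
#include <functional>
#include <memory>
#include <unordered_set>
#include <vector>

// Hypothetical node type for illustration; not the project's real interface.
struct Node {
    double data = 0.0;                            // forward value
    double grad = 0.0;                            // d(root)/d(this), filled by backward()
    std::vector<std::shared_ptr<Node>> parents;   // graph dependencies (inputs of the op)
    std::function<void()> backward_step = [] {};  // local chain-rule update; no-op for leaves
};

using Value = std::shared_ptr<Node>;

Value make_value(double data) {
    auto n = std::make_shared<Node>();
    n->data = data;
    return n;
}

// Forward pass records the inputs and how to push gradients back to them.
Value add(const Value& a, const Value& b) {
    auto out = make_value(a->data + b->data);
    out->parents = {a, b};
    Node* o = out.get();  // raw pointer so the closure does not own its own node
    out->backward_step = [a, b, o] {
        a->grad += o->grad;  // d(a+b)/da = 1
        b->grad += o->grad;  // d(a+b)/db = 1
    };
    return out;
}

Value mul(const Value& a, const Value& b) {
    auto out = make_value(a->data * b->data);
    out->parents = {a, b};
    Node* o = out.get();
    out->backward_step = [a, b, o] {
        a->grad += b->data * o->grad;  // d(a*b)/da = b
        b->grad += a->data * o->grad;  // d(a*b)/db = a
    };
    return out;
}

// Reverse-mode autodiff: topologically sort the graph, then apply each
// node's local update from the root back toward the leaves.
void backward(const Value& root) {
    assert(root && "backward() needs a non-null root");
    std::vector<Node*> topo;
    std::unordered_set<Node*> visited;
    std::function<void(Node*)> visit = [&](Node* n) {
        if (!visited.insert(n).second) return;  // already visited
        for (const auto& p : n->parents) visit(p.get());
        topo.push_back(n);  // pushed after all of its inputs
    };
    visit(root.get());
    root->grad = 1.0;  // d(root)/d(root)
    for (auto it = topo.rbegin(); it != topo.rend(); ++it) (*it)->backward_step();
}
```

For `d = a * b + c` with `a = 2`, `b = -3`, and `c = 10`, this sketch gives `d = 4` and gradients of `-3`, `2`, and `1` for `a`, `b`, and `c`. Gradients are accumulated with `+=` so that a value used more than once, as in `x * x`, receives the sum of its contributions.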

Technical choices

- I used C++ to stay close to memory and implementation details rather than relying on a higher-level numerical stack.

- I kept the engine intentionally minimal so the graph construction and backward pass stay inspectable.

- I treated the project as both a learning artifact and a code quality exercise: readable abstractions, manageable interfaces, and clear control over the computational graph.

Why it matters

This project matters because it demonstrates understanding rather than just library usage, which I think counts for applied AI roles. It shows I can reason from first principles, move between theory and implementation, and build small but meaningful systems that expose core ML mechanics.

What I would improve next

- Add tensor support beyond scalar values.

- Benchmark memory and runtime tradeoffs against more optimized implementations.

- Expand test coverage around graph correctness and gradient stability.

- Add richer examples that move from toy networks toward more realistic training loops.
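For the test coverage item above, one common approach is to compare each analytic gradient against a central finite-difference estimate. The sketch below shows the idea on a plain `double -> double` function so it stands alone. The helper names `numeric_grad` and `grads_match` are hypothetical, and the step size and tolerance are assumed defaults rather than tuned values.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <functional>

// Central finite-difference estimate of df/dx at x.
double numeric_grad(const std::function<double(double)>& f, double x, double h = 1e-5) {
    assert(h > 0.0);
    return (f(x + h) - f(x - h)) / (2.0 * h);
}

// True when the analytic and numeric gradients agree within a tolerance
// that is absolute near zero and relative for larger magnitudes.
bool grads_match(double analytic, double numeric, double tol = 1e-6) {
    double scale = std::max({1.0, std::fabs(analytic), std::fabs(numeric)});
    return std::fabs(analytic - numeric) <= tol * scale;
}
```

In a test, the analytic side would come from the engine's backward pass and the numeric side from re-running the forward computation at `x + h` and `x - h`. A mismatch usually points to a wrong local derivative or a missed gradient accumulation.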